K-decay: A New Method For Learning Rate Schedule

Alright, let's talk about something that might make your eyes glaze over quicker than a poorly rendered GIF: learning rate schedules in machine learning. Now, I know what you're thinking. "Learning rates? Schedules? Isn't that just for the super-nerdy, coffee-fueled folks hunched over glowing screens at 3 AM?" Well, yes and no. It's a bit like figuring out how much sugar to put in your coffee. Too little, and it's bitter. Too much, and it's… well, undrinkable. And just like your perfect coffee blend, finding the right "schedule" for your machine learning model to learn can be a real puzzler.

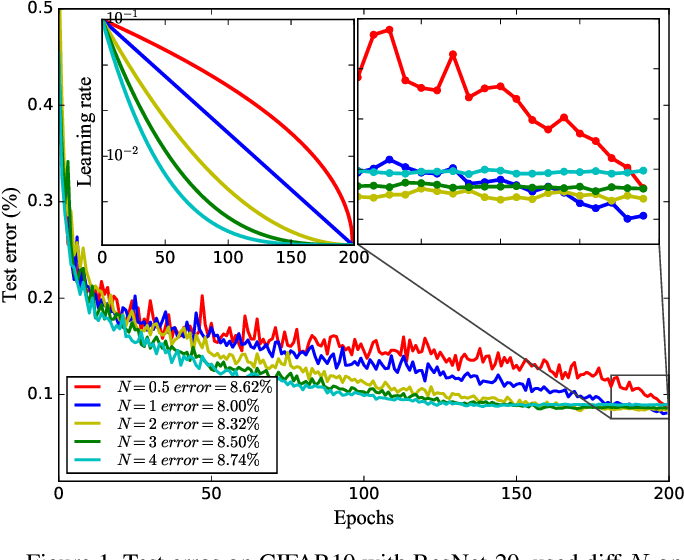

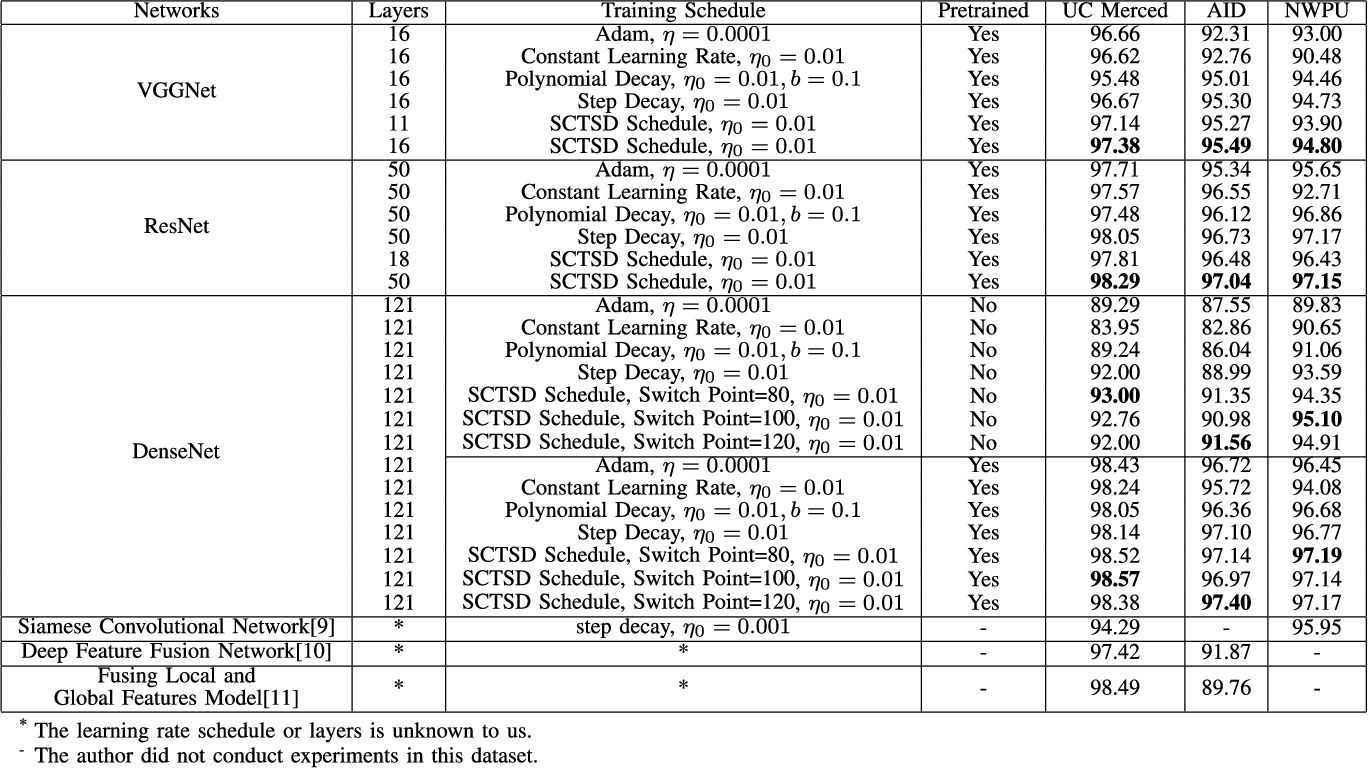

For ages, we've been playing with these things called step decay, exponential decay, and all sorts of other fancy-sounding methods. They're like the classic recipes for baking a cake. You follow the instructions, add the ingredients in the right order, and hope for the best. Sometimes you get a masterpiece, and sometimes… well, let's just say the smoke alarm gets a good workout. And then there’s the sheer terror of picking the right numbers. Is it 0.1 or 0.01? Is the drop every 10 epochs or 20? It's enough to make you want to go back to drawing with crayons.
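If those classic recipes feel abstract, here is a minimal sketch of the two named above, written as plain Python functions. The function names and the default numbers (`base_lr`, `drop`, `every`, `gamma`) are made up for illustration; they are exactly the sort of values you end up agonizing over.

```python
def step_decay(epoch, base_lr=0.1, drop=0.1, every=10):
    """Step decay: multiply the rate by `drop` once every `every` epochs."""
    return base_lr * drop ** (epoch // every)


def exponential_decay(epoch, base_lr=0.1, gamma=0.95):
    """Exponential decay: multiply the rate by `gamma` after every epoch."""
    return base_lr * gamma ** epoch


if __name__ == "__main__":
    # Print both schedules at a few epochs to see the "staircase" versus
    # the smooth slide.
    for epoch in (0, 9, 10, 25):
        print(epoch, step_decay(epoch), exponential_decay(epoch))
```

Both schedules are fixed before training starts, which is the "follow the recipe and hope" feeling described above.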

But here’s where things get interesting. Imagine a new kid on the block, a method that’s shaking things up. It’s called K-decay. Now, before you start picturing a secret agent or a superhero, it's just a name. But the idea behind it? That’s where the fun begins.

Think of it this way: with the old methods, it's like you're setting a strict diet for your model. "Okay, you get this much energy today, and tomorrow you get a little less, and the day after that, even less." It’s all very planned out. But what if your model is having a growth spurt? Or what if it suddenly decides it needs a cheat day? The old schedules don't really account for that. They’re rigid. They’re like your grandma insisting on eating boiled cabbage every single day.

K-decay, on the other hand, is a bit more… flexible. It's like that friend who's always up for trying new things. Strictly speaking, it is still a schedule you pick before training starts, but it hands you one extra knob, k, that bends the shape of the decay curve: how long the learning rate stays high and how sharply it falls near the end. Tuning k is the closest you get to asking your model, "Hey, buddy, how are you feeling about this learning thing today?" And the model might say, "You know, I'm feeling pretty good, maybe I can handle a little more learning!" or "Whoa there, partner, I'm feeling a bit overwhelmed. Let's slow down a tad."

This might sound a bit too… intuitive for some. I mean, we’re talking about algorithms here, not therapy sessions. But isn't that what we're trying to do? We're trying to guide these complex systems to learn effectively. And sometimes, being a little less like a drill sergeant and a little more like a supportive coach can go a long way.

The beauty of K-decay is that it tries to be smarter about when and how much to adjust the learning rate. It's not just about blindly dropping the rate at certain intervals. It's about making more informed decisions. It’s like realizing your cake isn’t rising evenly and deciding to move it to a different rack in the oven, instead of just sticking to the recipe’s exact instructions for temperature and time regardless of what’s happening.
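To make that a little less hand-wavy, here is a minimal sketch of K-decay as I understand it from the k-decay paper: take a schedule you already know and replace its progress term t/T with t^k/T^k, where k is the one new hyperparameter. With k = 1 you get the ordinary schedule back; with k > 1 the rate stays high for longer and then drops more steeply toward the end. Note that this makes it a reshaped, still pre-planned curve, not something that reacts to the loss as training runs. The function names and default values are hypothetical, and the formulas are my reading of the method, so check them against the paper before relying on them.

```python
import math


def poly_k_decay(t, total_steps, lr_start=0.1, lr_end=0.0, k=1.0, power=1.0):
    """Polynomial decay with the assumed k-decay term t^k / T^k.

    k = 1 reduces to ordinary polynomial decay.
    """
    frac = (t ** k) / (total_steps ** k)
    return (lr_start - lr_end) * (1.0 - frac) ** power + lr_end


def cosine_k_decay(t, total_steps, lr_start=0.1, lr_end=0.0, k=1.0):
    """Cosine decay with the same assumed substitution of t^k / T^k."""
    frac = (t ** k) / (total_steps ** k)
    return lr_end + 0.5 * (lr_start - lr_end) * (1.0 + math.cos(math.pi * frac))


if __name__ == "__main__":
    # Compare the ordinary schedule (k=1) with a k=3 variant at a few steps.
    total = 100
    for t in (0, 25, 50, 75, 100):
        print(t, poly_k_decay(t, total, k=1.0), poly_k_decay(t, total, k=3.0))
```

The appeal of this design is that k is a single number layered on top of a schedule you already use, so trying it out costs one extra hyperparameter sweep, not a new training setup.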

Now, I’m not saying K-decay is going to magically solve all your machine learning woes. There’s no silver bullet in this field, unfortunately. But it’s a breath of fresh air. It’s a different way of thinking about a fundamental part of the training process. It’s a departure from the "set it and forget it" mentality that can sometimes plague our experiments. Instead, it encourages a more dynamic interaction between us and our models.

"It's like the difference between a pre-programmed robot and a really smart puppy. One just follows orders, the other learns and adapts."

And let's be honest, the old ways can feel a bit like a hamster wheel. You’re just running and running, and you hope you’re getting somewhere. With K-decay, there’s a sense of progress, of real adaptation. It’s about getting more bang for your computational buck. It’s about helping your model learn more efficiently, which, let’s face it, saves everyone time and sanity.

So, next time you're wrestling with a learning rate schedule, and you feel that familiar urge to just throw your hands up in despair, consider giving K-decay a whirl. It might just be the little nudge your model needs to truly shine. It’s a bit of an "unpopular opinion" to champion a newer method over the tried-and-true, but sometimes, the new kids on the block are exactly what we need to make things a little easier, and a lot more entertaining. And who doesn’t want more entertaining learning, right?