Confidence Intervals and the Within-the-Bar Bias

Hey there, sunshine seekers and data dabblers! Ever feel like the world’s a giant, slightly wobbly buffet of information, and you’re trying to pick out the tastiest bits? Well, pull up a comfy chair and let’s chat about something that sounds a little fancy but is actually as down-to-earth as your favorite pair of jeans: Confidence Intervals. And while we’re at it, we’ll peek behind the curtain at a sneaky little trickster called the Within-the-Bar Bias. Think of this as your cheat sheet to understanding how we can be mostly sure about things, without getting bogged down in the statistical nitty-gritty. It’s like knowing your favorite barista makes a really good latte, most of the time.

So, what’s a confidence interval, you ask? Imagine you’re trying to guess the average height of all the golden retrievers in your neighborhood. You can’t possibly measure every single one, right? That would be… a lot of slobbery hugs. Instead, you pick a sample. Maybe you measure ten golden retrievers at the park. Let’s say their average height is 23 inches. Now, is 23 inches the exact average height of all golden retrievers? Probably not. But it’s a pretty good guess!

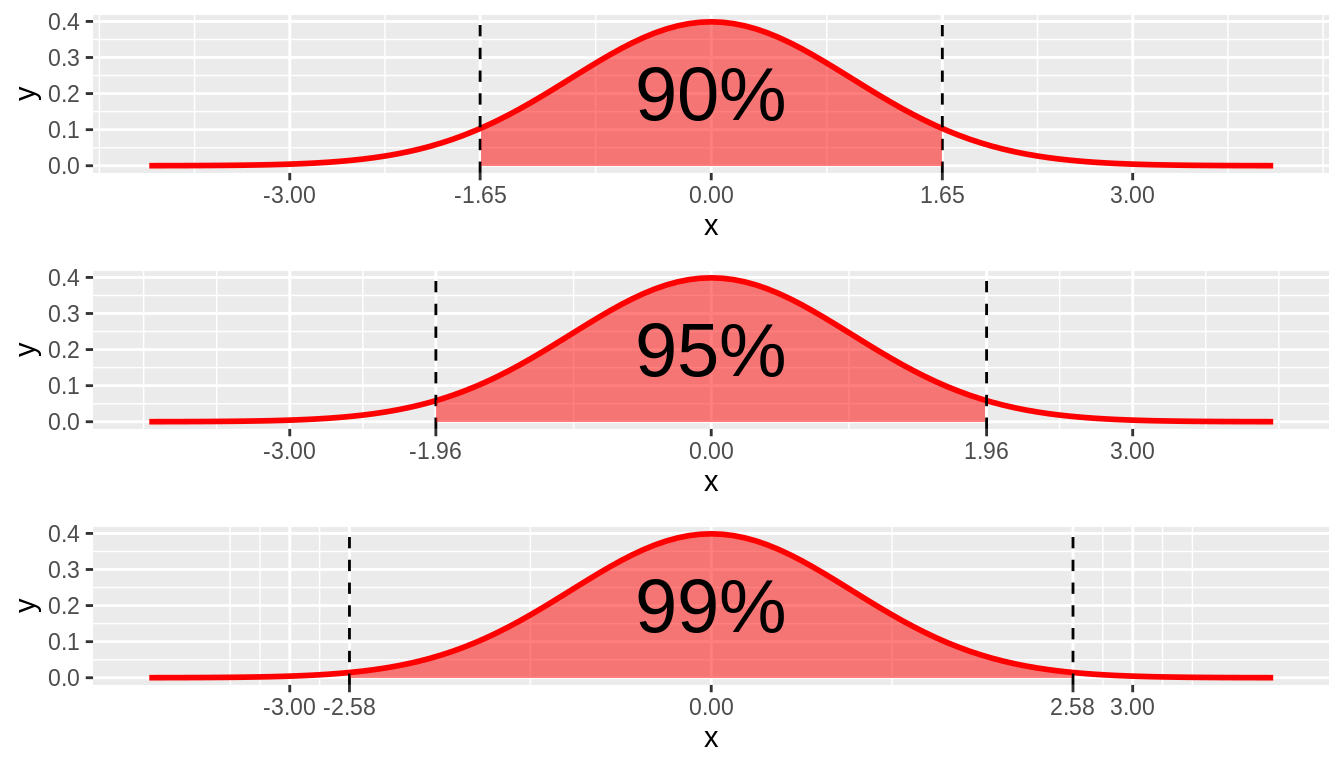

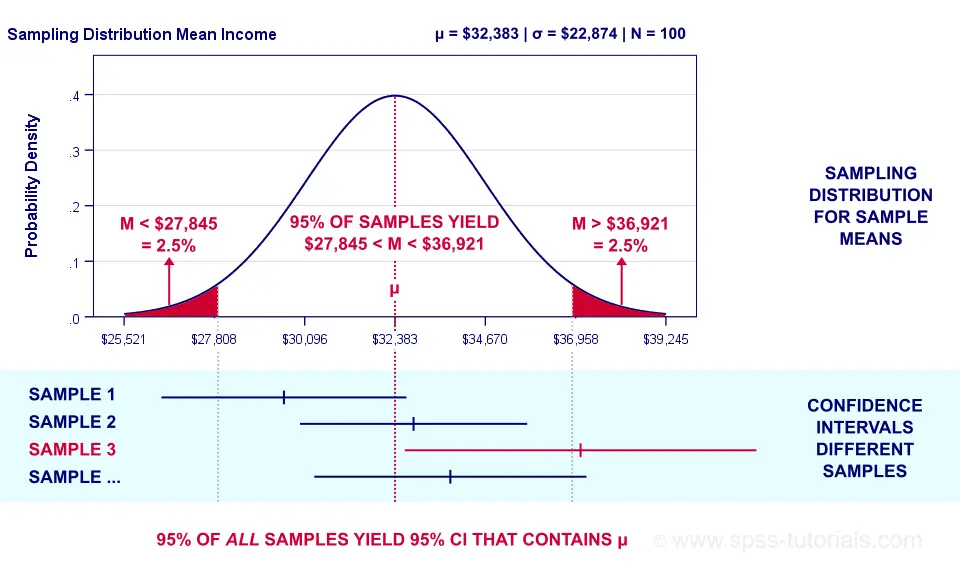

A confidence interval takes that guess and gives it a bit of breathing room. Instead of saying "the average height is 23 inches," we say something like, "we are 95% confident that the true average height of all golden retrievers in the neighborhood is between 22 and 24 inches." See? It’s not a single number, but a range. This range is your confidence interval. It acknowledges that there's a little wiggle room, a bit of uncertainty, because you only looked at a sample, not the whole darn population.
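If you'd like to see where a range like that comes from, here is a minimal sketch. The ten heights are made up for illustration, and the function name `mean_confidence_interval` is just a label chosen here. It uses the textbook recipe of mean plus or minus a critical value times the standard error, with the Student's t critical value for nine degrees of freedom typed in by hand.

```python
import math
import statistics

# Hypothetical heights (inches) of ten golden retrievers measured at the park.
heights = [22.0, 24.5, 23.0, 21.5, 23.5, 22.5, 24.0, 23.0, 22.0, 24.0]


def mean_confidence_interval(sample, t_critical):
    """Return (low, high) for the mean: mean +/- t_critical * s / sqrt(n)."""
    n = len(sample)
    mean = statistics.mean(sample)
    # Standard error: sample standard deviation divided by sqrt(n).
    standard_error = statistics.stdev(sample) / math.sqrt(n)
    margin = t_critical * standard_error
    return mean - margin, mean + margin


# Two-sided 95% critical value of Student's t with 9 degrees of freedom (n - 1).
T_95_DF9 = 2.262

low, high = mean_confidence_interval(heights, T_95_DF9)
print(f"sample mean: {statistics.mean(heights):.1f} in")
print(f"95% CI: {low:.1f} to {high:.1f} in")
```

With this invented sample the range comes out a little narrower than the 22 to 24 inches in the story; different dogs would give a different range.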

Think of it like this: you're not claiming to have captured the exact essence of your grandma's secret cookie recipe, but you've got a pretty solid batch that captures the spirit of those cookies. It’s that comforting feeling of "I'm pretty sure about this." The "95% confident" bit is key. It means that if you were to repeat this sampling process many, many times, 95% of the confidence intervals you calculated would contain the true average height. It’s about the reliability of the method, not a guarantee for your specific result. It’s like saying, "This recipe works 95% of the time to get me those nostalgic cookie vibes."
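The "repeat it many, many times" idea is easy to try on a computer. The sketch below assumes a pretend neighborhood where dog heights follow a normal distribution with a true mean of 23 inches and a standard deviation of 1.5 (both invented), draws ten dogs at a time, and counts how often the interval catches the true mean. The function name `coverage_rate` is hypothetical.

```python
import math
import random
import statistics


def coverage_rate(true_mean, true_sd, n, t_critical, trials, seed=0):
    """Share of simulated intervals that contain the true mean."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    hits = 0
    for _ in range(trials):
        # Draw a fresh sample of n dogs from the assumed population.
        sample = [rng.gauss(true_mean, true_sd) for _ in range(n)]
        mean = statistics.mean(sample)
        margin = t_critical * statistics.stdev(sample) / math.sqrt(n)
        if mean - margin <= true_mean <= mean + margin:
            hits += 1
    return hits / trials


# 2.262 is the two-sided 95% t critical value for 9 degrees of freedom.
rate = coverage_rate(true_mean=23.0, true_sd=1.5, n=10, t_critical=2.262, trials=5000)
print(f"share of intervals containing the true mean: {rate:.3f}")
```

The share should land close to 0.95. Any single interval either contains the true mean or it doesn't; the 95% describes the track record of the method.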

Why is this so useful? Because in the real world, we’re always dealing with samples. Think about polls during election season. They don't ask everyone their vote! They ask a representative sample and then give you a margin of error. That margin of error is the "plus or minus" part, which is half the width of the confidence interval: a result of 54% with a margin of 3 points means an interval of roughly 51% to 57%. It tells you how far the poll result could plausibly sit from the real number. It's the statistical equivalent of saying, "Okay, the polls say Candidate A is leading, but it could be by a hair or by a decent margin. We're pretty sure they're ahead, but not absolutely certain."
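For the curious, a poll's margin of error can be sketched in a few lines. The poll below (54% support among 1,000 respondents) is invented, the function name is hypothetical, and the formula is the common normal-approximation one, which assumes a simple random sample. The margin is the plus-or-minus amount, so the full interval is twice as wide.

```python
import math
from statistics import NormalDist

# Critical value for a two-sided 95% interval under the normal approximation.
Z_95 = NormalDist().inv_cdf(0.975)  # about 1.96


def poll_margin_of_error(share, n, z=Z_95):
    """Margin of error for a proportion: z * sqrt(p * (1 - p) / n)."""
    return z * math.sqrt(share * (1 - share) / n)


# Hypothetical poll: 54% support among 1,000 respondents.
share, n = 0.54, 1000
margin = poll_margin_of_error(share, n)
low, high = share - margin, share + margin
print(f"margin of error: +/- {margin * 100:.1f} points")
print(f"95% CI: {low * 100:.1f}% to {high * 100:.1f}%")
```

Real pollsters often adjust for weighting and survey design, so published margins can differ from this simple version.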

This concept pops up everywhere, from scientific research to marketing reports. When a drug company says a new medication is "90% effective," that figure is an estimate from a trial, and the full study report will usually give a confidence interval around it. They're not saying the true effectiveness is exactly 90%, but that the trial data point to a value somewhere in a range around 90%. It’s the difference between absolute truth and a really, really strong indication.

Now, let’s talk about the flip side, the sneaky little saboteur in our quest for certainty: the Within-the-Bar Bias. This one is a bit more about how we present and interpret data, especially visual data like charts and graphs. Imagine you're at a trendy bar, and they've got a cool infographic showing the popularity of different craft beers. The beers with the highest sales are represented by tall, impressive bars. Everything looks great, right?

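In graph-reading research, the Within-the-Bar Bias is the tendency to treat values that fall inside a bar as more likely than values sitting just as far above its top, even though the bar's top is only marking an average. More loosely, it's the habit of focusing only on the information within those prominent bars and overlooking what's outside of them, or even what's implied by their height. It's like only looking at the delicious-looking cocktails at the top of the menu and forgetting about the perfectly good, less flashy options at the bottom, or the fact that the menu is organized by price, not by taste.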

Let’s break this down with an example. Suppose you see a bar chart showing customer satisfaction scores for different products. Product A has a bar reaching 8.5 out of 10, and Product B has a bar at 7.0 out of 10. At first glance, Product A is a clear winner. You might immediately think, "Wow, Product A is way better!" This is where the Within-the-Bar Bias can kick in. You're focusing on the height of the bars and the difference they represent.

But what if the confidence intervals for these scores were very wide? What if the confidence interval for Product A was 7.5 to 9.5, and for Product B it was 6.0 to 8.0? In this scenario, the intervals actually overlap, between 7.5 and 8.0. This overlap means that while the sample average for Product A is higher, the two true averages could be much closer together than the bars suggest. Overlap alone doesn't prove the products are tied, though; the cleaner check is a confidence interval for the difference between them. Either way, the bars look different, but the underlying data, when considering the uncertainty (our confidence intervals!), tells a less definitive story.
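One way to see what overlapping intervals do and don't tell you is to work out an interval for the difference itself. This is a rough sketch under loud assumptions: both intervals are treated as 95% normal-based intervals from independent samples, so each standard error can be backed out from the interval's half-width. The function names are made up for this example.

```python
import math
from statistics import NormalDist

Z_95 = NormalDist().inv_cdf(0.975)  # about 1.96


def standard_error_from_interval(low, high, z=Z_95):
    """Back out the standard error from a normal-based interval's half-width."""
    return (high - low) / 2 / z


def difference_interval(mean_a, ci_a, mean_b, ci_b, z=Z_95):
    """95% interval for (mean_a - mean_b), assuming independent samples."""
    se_a = standard_error_from_interval(*ci_a, z)
    se_b = standard_error_from_interval(*ci_b, z)
    # Standard errors of independent estimates combine in quadrature.
    se_diff = math.sqrt(se_a**2 + se_b**2)
    diff = mean_a - mean_b
    return diff - z * se_diff, diff + z * se_diff


# Product A: 8.5 (7.5 to 9.5); Product B: 7.0 (6.0 to 8.0), as in the example.
diff_low, diff_high = difference_interval(8.5, (7.5, 9.5), 7.0, (6.0, 8.0))
print(f"difference A - B: {diff_low:.2f} to {diff_high:.2f}")
```

Under these assumptions the interval for the difference sits just above zero, stretching from nearly nothing to almost three points. So the overlap on the chart is a reason for caution about how big the gap is, not proof that the products are tied.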

The Within-the-Bar Bias is our brain’s shortcut. It’s easier to compare the tops of the bars than to mentally overlay confidence intervals. It's like our eyes are drawn to the brightest, shiniest objects and we don't always dig deeper. Think about social media trends. We see a lot of people posting about a new dance challenge, and we might assume everyone is doing it. We're focusing on the visible "bar" of popularity and might overlook the vast majority who aren't participating.

This bias can be particularly tricky when dealing with proportions or percentages. Imagine a news report showing that a certain policy is supported by 60% of people in one city, and 55% in another. The immediate thought is that the first city is much more supportive. But if the confidence intervals are wide, say 55-65% for the first city and 50-60% for the second, the difference might not be statistically significant. The "bars" look different, but the underlying populations might be more similar than we think.
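Here is the two-city comparison as a small sketch. The sample size of 400 people per city is an assumption picked so that each city's margin lands near the five points in the example, and the intervals use the simple normal approximation for proportions. The function names are hypothetical.

```python
import math
from statistics import NormalDist

Z_95 = NormalDist().inv_cdf(0.975)  # about 1.96


def proportion_se(successes, n):
    """Return the sample proportion and its standard error."""
    p = successes / n
    return p, math.sqrt(p * (1 - p) / n)


def difference_in_proportions(successes_a, n_a, successes_b, n_b, z=Z_95):
    """95% interval for p_a - p_b, assuming independent samples."""
    p_a, se_a = proportion_se(successes_a, n_a)
    p_b, se_b = proportion_se(successes_b, n_b)
    se_diff = math.sqrt(se_a**2 + se_b**2)
    diff = p_a - p_b
    return diff - z * se_diff, diff + z * se_diff


# Hypothetical counts: 240 of 400 (60%) in city one, 220 of 400 (55%) in city two.
diff_low, diff_high = difference_in_proportions(240, 400, 220, 400)
print(f"difference: {diff_low * 100:.1f} to {diff_high * 100:.1f} points")
```

With these made-up counts the interval for the difference includes zero, which is what "might not be statistically significant" looks like in numbers.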

It’s like looking at a photograph of a crowd. You see a few people waving enthusiastically, and you might infer that the whole crowd is ecstatic. But the camera might have missed the quiet folks in the back, or the ones who are just politely clapping. The visible "bars" of enthusiasm don't tell the whole story.

So, how do we fight this sneaky bias and appreciate the nuance of confidence intervals? It’s all about adding a little bit of critical thinking and visual detective work to our daily dose of information consumption. When you see a chart or a statistic, especially one designed to impress or persuade, ask yourself a few gentle questions:

Practical Tips for Navigating the Data Jungle:

- Look for the Wiggle Room: If possible, see if the source of the information provides confidence intervals or a margin of error. If it doesn't, be extra cautious. A single number presented as absolute truth in a complex situation is often a red flag. Think of it like getting a flat "yes" to "Will it rain tomorrow?" when a good forecast always comes with a probability.

- Don't Just Compare Tops: When looking at bar charts, mentally (or literally!) sketch in those potential confidence intervals. Do the bars' ranges overlap significantly? If they do, the difference might be less pronounced than it appears. Imagine those beer bars at the pub. If the "customer enjoyment" bars overlap a lot, it means the difference in taste experience might be pretty subtle.

- Consider the Sample Size: Generally, larger sample sizes lead to narrower confidence intervals (less wiggle room). If a study has a tiny sample, be extra skeptical of any strong conclusions. It's like trying to get a sense of a whole party by only talking to two people.

- Beware of "Significant" Claims: Just because a difference is statistically "significant" doesn't mean it's practically important or meaningful in everyday life. A study might find a statistically significant difference in the average lifespan of two types of widgets, but if it’s only a fraction of a second, who cares?

- Embrace Uncertainty: Real life is rarely black and white. Confidence intervals remind us that most knowledge is probabilistic. It's okay not to have absolute certainty about everything. Think of it as the joy of discovery, where you're refining your understanding rather than reaching a final, unchangeable verdict.

- Context is King: Always consider the context. Who is presenting the data? What is their agenda? Are they trying to sell you something, convince you of a political viewpoint, or share genuine findings? A charismatic presenter might make a weak statistical argument look very strong. It’s like a magician distracting you with a flourish while they hide the rabbit.
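To put a number on the sample-size tip above, here is a small sketch of how the margin of error shrinks as the sample grows. The standard deviation of 2.0 is invented, and the normal critical value is used for simplicity, which is an approximation for small samples. The function name is hypothetical.

```python
import math
from statistics import NormalDist

Z_95 = NormalDist().inv_cdf(0.975)  # about 1.96


def margin_of_error(sample_sd, n, z=Z_95):
    """Half-width of a normal-based interval for a mean: z * s / sqrt(n)."""
    return z * sample_sd / math.sqrt(n)


SAMPLE_SD = 2.0  # hypothetical spread of the measurements

margins = {n: margin_of_error(SAMPLE_SD, n) for n in (25, 100, 400, 1600)}
for n, margin in margins.items():
    print(f"n = {n:>4}: +/- {margin:.2f}")
```

Because the margin scales with one over the square root of the sample size, you need four times as many observations to cut the wiggle room in half.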

Think about your own experiences. When you read a product review, do you just look at the star rating? Or do you read a few comments to get a sense of the range of opinions? That’s you, intuitively doing what confidence intervals do more formally: looking past a single number to the spread behind it, and sidestepping the Within-the-Bar Bias!

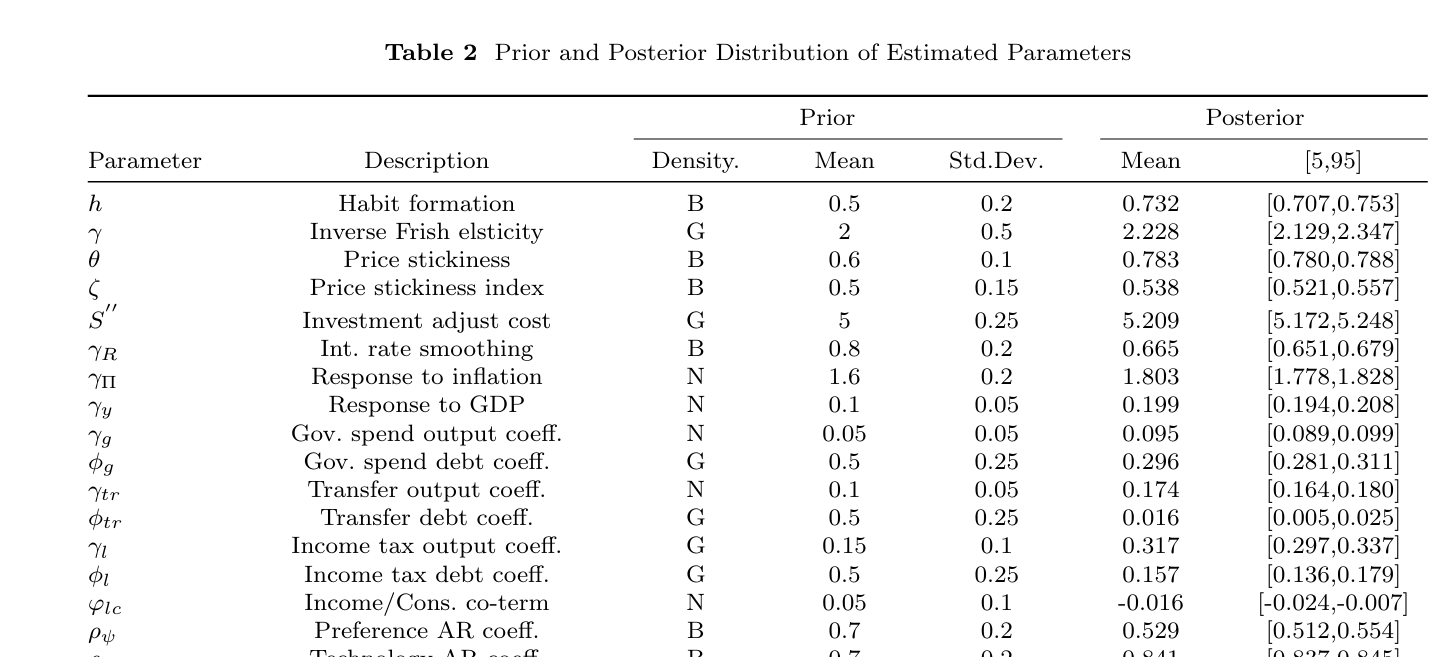

Let's take a fun little detour. Did you know that the confidence interval as we use it today was introduced by statistician Jerzy Neyman in the 1930s? And that "95%" we keep talking about? It's not a magical number; it’s just a convention that's proven useful. You can just as easily calculate a 90% or a 99% confidence interval. A higher confidence level buys you more certainty at the price of a wider range, and 95% is the sweet spot for many applications, balancing certainty with practicality. It’s like choosing the perfect temperature for your morning coffee: not too hot, not too cold, just right.
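You can watch that trade-off between confidence level and interval width in a few lines. The sketch assumes a normal-based interval and an invented standard error of 0.5; `z_critical` is a helper name made up for this example.

```python
from statistics import NormalDist


def z_critical(confidence):
    """Two-sided critical value of the standard normal for a confidence level."""
    return NormalDist().inv_cdf(0.5 + confidence / 2)


STANDARD_ERROR = 0.5  # hypothetical standard error of some estimate

margins = {level: z_critical(level) * STANDARD_ERROR for level in (0.90, 0.95, 0.99)}
for level, margin in margins.items():
    print(f"{level:.0%} interval: estimate +/- {margin:.2f}")
```

Asking for more confidence from the same data always widens the interval.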

So, next time you see a graph or hear a statistic, take a moment. Don't just be wowed by the height of the bar or the conviction in the speaker's voice. Ask about the range. Consider the uncertainty. Understand that most of the time, we're working with pretty good guesses, and that’s perfectly okay. It’s the statistical equivalent of knowing your friend is generally reliable, even if they’re sometimes a few minutes late.

In our daily lives, this means being a little less prone to jumping to conclusions. When someone tells you "everyone loves this new trend," pause. Is that really everyone, or just the vocal few whose opinions are loudest? When you see a headline claiming a breakthrough, consider the source and if there’s more to the story than the boldest claim. It's about cultivating a healthy skepticism that’s balanced with an openness to learn and understand.

Ultimately, confidence intervals and understanding biases like the Within-the-Bar Bias are about helping us make better, more informed decisions. They’re not about becoming a statistics guru overnight, but about developing a more nuanced way of looking at the world. It's about appreciating that most things are shades of grey, and that’s where the real richness of understanding lies. So, go forth, be confident in your uncertainty, and keep those mental confidence intervals in view!