Code.org Lesson 13: Other Forms of Input Answers

Hey there, fellow curious minds! Ever wondered how your favorite apps and websites know what you want them to do? Like, how does a game know when you tap the screen, or how does a voice assistant understand your mumblings? Well, today we're diving into the super cool world of how computers get information, specifically with a peek at Code.org Lesson 13: Other Forms of Input. No need to be a coding wizard for this; we're keeping it totally chill and exploring the "how" behind the magic!

Think of a computer like a really smart, but slightly clueless, helper. It's amazing at following instructions, but it needs us to tell it what to do and what's happening around it. That's where "input" comes in. We've probably all messed around with typing on a keyboard or clicking a mouse, right? Those are the classic ways we give a computer information. But what if there are other, more exciting ways?

Code.org's Lesson 13 gets us thinking beyond the keyboard and mouse. It’s like opening up a treasure chest of possibilities for how we interact with technology. The lesson asks us to consider, "What else can we use to tell a computer what’s going on?" It’s a question that unlocks a whole universe of interaction, and honestly, it's pretty mind-blowing when you start to think about it.

So, What's the Big Deal About "Other Forms of Input"?

You might be thinking, "Okay, input, got it. Keyboard, mouse. What's the fuss?" Well, the fuss is that technology is getting way more integrated into our lives, and we're not always sitting at a desk with a keyboard. Think about your smartphone. You tap, sure, but you also swipe, pinch, and sometimes even talk to it! Your smart speaker listens to your voice. Your fitness tracker senses your movement. These are all examples of "other forms of input" in action.

Lesson 13 is all about breaking down these different ways computers gather information. It's not just about the device you're using, but about the type of signal or data that device is picking up. Imagine you're trying to explain something to someone. You could write it down (keyboard), point to something (mouse), speak it (voice input), or even use gestures (motion input). Computers are learning to understand all these different "languages" too.

Let's Get Specific: What Are These "Other Forms"?

Code.org, in its wonderfully accessible way, introduces us to some fantastic examples. One of the coolest ones is sound input. Yep, that's your voice! Think about when you say "Hey Google" or "Siri." A microphone picks up the sound waves from your voice and turns them into a stream of numbers, and then the device (with some seriously complex processing) tries to figure out what you mean. It's like teaching a parrot to understand sentences instead of just mimicking words. Pretty neat, huh?
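To make "turning sound into data" a bit more concrete, here is a minimal Python sketch. It does not touch a real microphone: the `sample_tone` function fakes one by measuring a pure tone many times per second, and `heard_something` just checks how loud the resulting numbers are. The names, the sample rate, and the threshold are all made up for illustration and are not from the Code.org lesson.

```python
import math

SAMPLE_RATE = 8000  # measurements per second (an assumed value for this sketch)

def sample_tone(frequency_hz, duration_s, amplitude):
    """Pretend microphone: measure a pure tone SAMPLE_RATE times per second."""
    count = int(SAMPLE_RATE * duration_s)
    return [
        amplitude * math.sin(2 * math.pi * frequency_hz * n / SAMPLE_RATE)
        for n in range(count)
    ]

def loudness(samples):
    """Root-mean-square level: a rough measure of how loud the samples are."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def heard_something(samples, threshold=0.1):
    """True when the samples are louder than the (hypothetical) threshold."""
    return loudness(samples) > threshold

quiet_room = sample_tone(440, 0.5, amplitude=0.01)  # barely any sound
speaking = sample_tone(440, 0.5, amplitude=0.8)     # someone talking

print(heard_something(quiet_room))  # False
print(heard_something(speaking))    # True
```

Real voice assistants do far more than measure loudness, but the first step is the same idea: the sound becomes a list of numbers the program can work with.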

Then there’s motion input. This is where things get really fun and active. Think about video games where you swing a tennis racket in real life and your character on screen does the same. Or how your phone might detect if you've turned it sideways. These devices have sensors, such as accelerometers and gyroscopes, that can detect movement, acceleration, and orientation. It’s like giving the computer a sense of its own physical state, or your physical actions, without you having to press a button. It’s the difference between telling someone you moved and them actually seeing you move.
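Here is a rough sketch of how a phone might decide it has been turned sideways. It assumes the motion sensor reports how strongly gravity pulls along the phone's short edge (`accel_x`) and long edge (`accel_y`); those names and the sample readings are hypothetical, not a real sensor API.

```python
def orientation(accel_x, accel_y):
    """Guess the screen orientation from gravity along the phone's two edges.

    accel_x: pull along the short edge, accel_y: pull along the long edge,
    both measured in g. These are made-up readings for illustration.
    """
    if abs(accel_y) >= abs(accel_x):
        return "portrait"   # gravity mostly runs along the long edge
    return "landscape"      # gravity mostly runs along the short edge

print(orientation(0.05, -0.98))  # portrait (held upright)
print(orientation(0.97, 0.10))   # landscape (turned sideways)
```

Notice that nobody pressed a button here. The program only looked at the numbers coming from the sensor.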

Another interesting one is image input. This is how cameras work. They capture light and turn it into a digital picture, which is really just a grid of pixels stored as numbers. This is the magic behind facial recognition on your phone, or how self-driving cars "see" the road. It’s like giving the computer eyes! The camera does the seeing, and software then processes those numbers to work out what is in the picture. Imagine teaching a robot to identify different types of flowers just by looking at them. That's image input at play.
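As a tiny illustration of a picture being "just numbers", the sketch below uses a made-up 4x4 grayscale image where 0 is black and 255 is white. The `bright_columns` helper is a hypothetical stand-in for the much fancier processing that real image recognition does.

```python
# A made-up 4x4 grayscale image: 0 is black, 255 is white.
image = [
    [10, 12, 200, 210],
    [8, 15, 220, 205],
    [11, 9, 215, 198],
    [14, 10, 207, 221],
]

def average_brightness(pixels):
    """Average of every pixel value in the image."""
    values = [value for row in pixels for value in row]
    return sum(values) / len(values)

def bright_columns(pixels, threshold=128):
    """Indexes of columns whose average brightness is above the threshold."""
    height = len(pixels)
    return [
        col for col in range(len(pixels[0]))
        if sum(row[col] for row in pixels) / height > threshold
    ]

print(average_brightness(image))  # 110.3125
print(bright_columns(image))      # [2, 3]
```

Even this toy version "notices" that the right half of the picture is lit up, purely by doing arithmetic on pixel values.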

There are even more nuanced forms, like touch input on screens, which goes beyond just a simple click. We're talking about pressure sensitivity (like on some drawing tablets) or multi-touch gestures (like pinching to zoom). It’s like the computer is not just feeling your finger, but feeling the way your finger is interacting with it. This makes for much more intuitive and expressive interfaces. It’s the difference between a blunt poke and a delicate caress.
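Pinch to zoom is a nice one to sketch because the math is so small: compare how far apart two fingers are at the start and at the end of the gesture. The function names and the touch coordinates below are assumptions for illustration, not any particular touchscreen API.

```python
import math

def distance(p, q):
    """Straight-line distance between two (x, y) touch points."""
    return math.hypot(q[0] - p[0], q[1] - p[1])

def pinch_scale(start_touches, end_touches):
    """Zoom factor for a two-finger pinch: above 1 zooms in, below 1 zooms out."""
    start = distance(*start_touches)
    end = distance(*end_touches)
    if start == 0:
        return 1.0  # fingers started on the same spot, so leave the zoom alone
    return end / start

start = ((100, 100), (200, 100))  # fingers 100 pixels apart
end = ((50, 100), (250, 100))     # fingers 200 pixels apart

print(pinch_scale(start, end))  # 2.0
print(pinch_scale(end, start))  # 0.5
```

A single click could never carry this much information, which is why multi-touch feels so much more expressive.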

Why is This So Important (and Fun!)?

Understanding these different forms of input is fundamental to understanding how we can make technology more accessible and more powerful. It’s about breaking down barriers. If someone can't easily use a keyboard, maybe they can use their voice. If a visual interface is tricky, perhaps motion control is the answer.

Plus, it opens up a world of creative possibilities! Imagine building an interactive art installation that responds to the sounds around it, or a game that you control by dancing. These are all made possible by understanding and utilizing these diverse input methods. It’s like a composer learning to use a whole new orchestra of instruments, each with its own unique sound and capability.

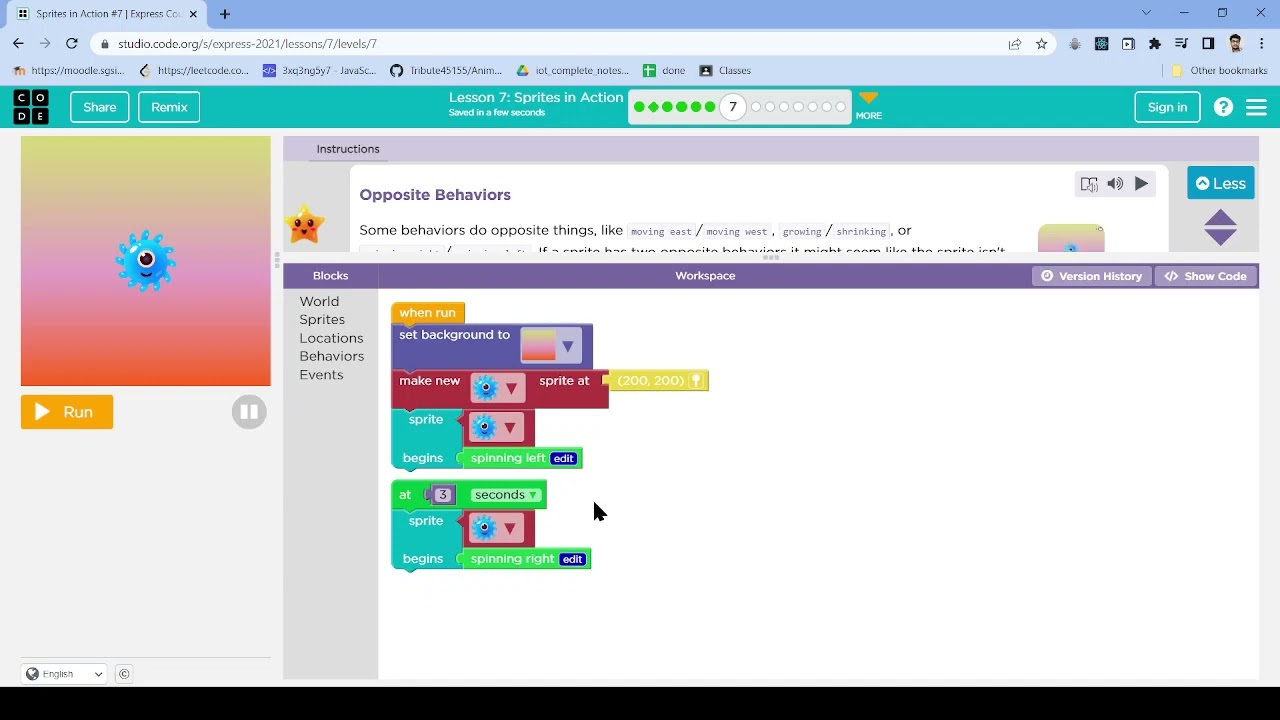

Lesson 13 on Code.org often presents this information in a way that encourages experimentation and play. They might ask you to think about how you would design an app that uses these inputs. For instance, how would you create a "Simon Says" game using voice commands? Or how could you use motion to control a character in a simple maze? These aren't just abstract concepts; they're invitations to create!
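For the "Simon Says" idea, one possible starting point is sketched below. It assumes some speech recognizer has already turned the player's voice into text, so the hypothetical `simon_says` function only has to decide whether the command counts.

```python
PREFIX = "simon says "

def simon_says(heard_text):
    """Return the action to perform, or None if Simon did not say it.

    heard_text is assumed to be the text a speech recognizer produced.
    """
    # Lowercase and collapse extra spaces so "Simon  Says JUMP" still works.
    text = " ".join(heard_text.lower().split())
    if text.startswith(PREFIX):
        return text[len(PREFIX):]
    return None

print(simon_says("Simon says jump"))  # jump
print(simon_says("jump"))             # None
```

From here a game could compare the returned action against what the player actually does, for example a tilt or a tap.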

Connecting the Dots: From Lesson to Real World

When you’re playing a game on your tablet, and you tilt it to steer, that’s motion input. When you ask your smart speaker for the weather, that’s sound input. When you unlock your phone with your face, that’s image input. These aren’t futuristic concepts; they’re part of our everyday digital lives!

Code.org does a brilliant job of taking something that could seem quite technical and making it relatable. They’re not just teaching you what these inputs are, but why they matter. They're encouraging you to think like a designer and a problem-solver. They want you to see the world of computing not just as code on a screen, but as a dynamic, interactive system that responds to us in countless ways.

So, next time you’re using your phone, your smart speaker, or playing a game that uses your movement, take a moment to appreciate the "other forms of input" at play. It’s a testament to how far technology has come, and a tantalizing glimpse into where it’s going. It’s all about making technology more intuitive, more responsive, and frankly, a whole lot more fun. Keep exploring, keep questioning, and who knows, maybe you’ll be designing the next amazing input experience!