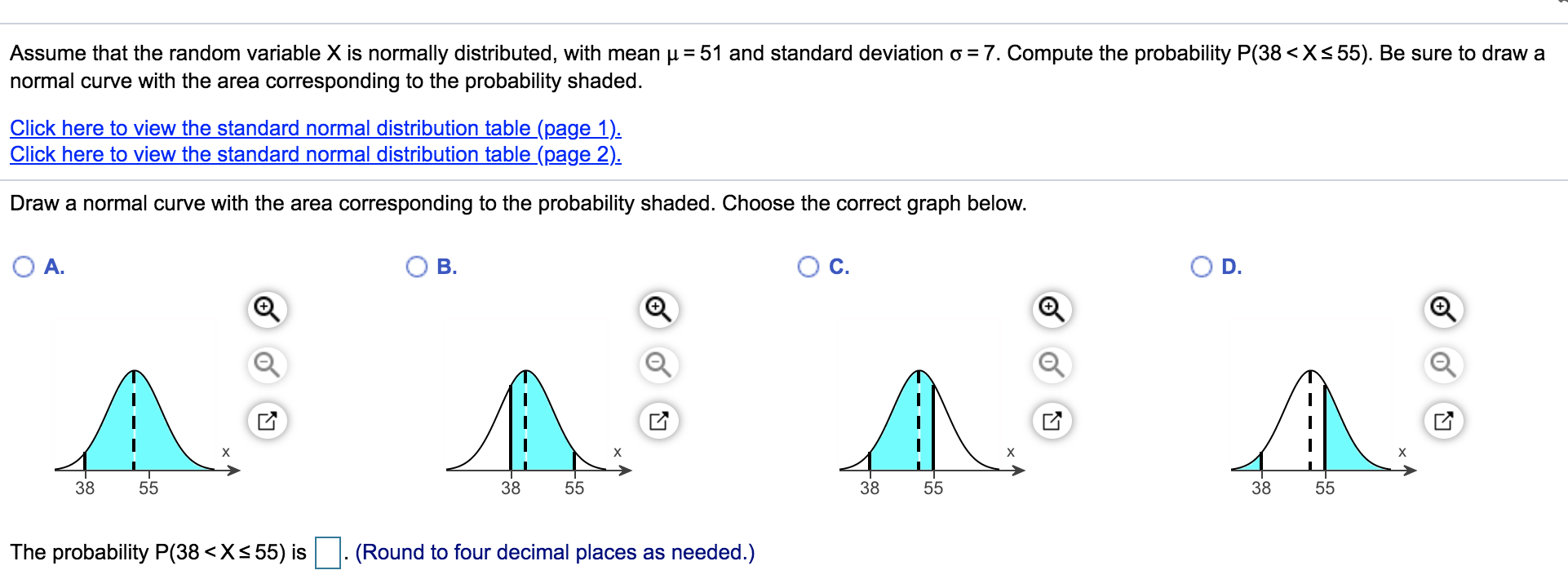

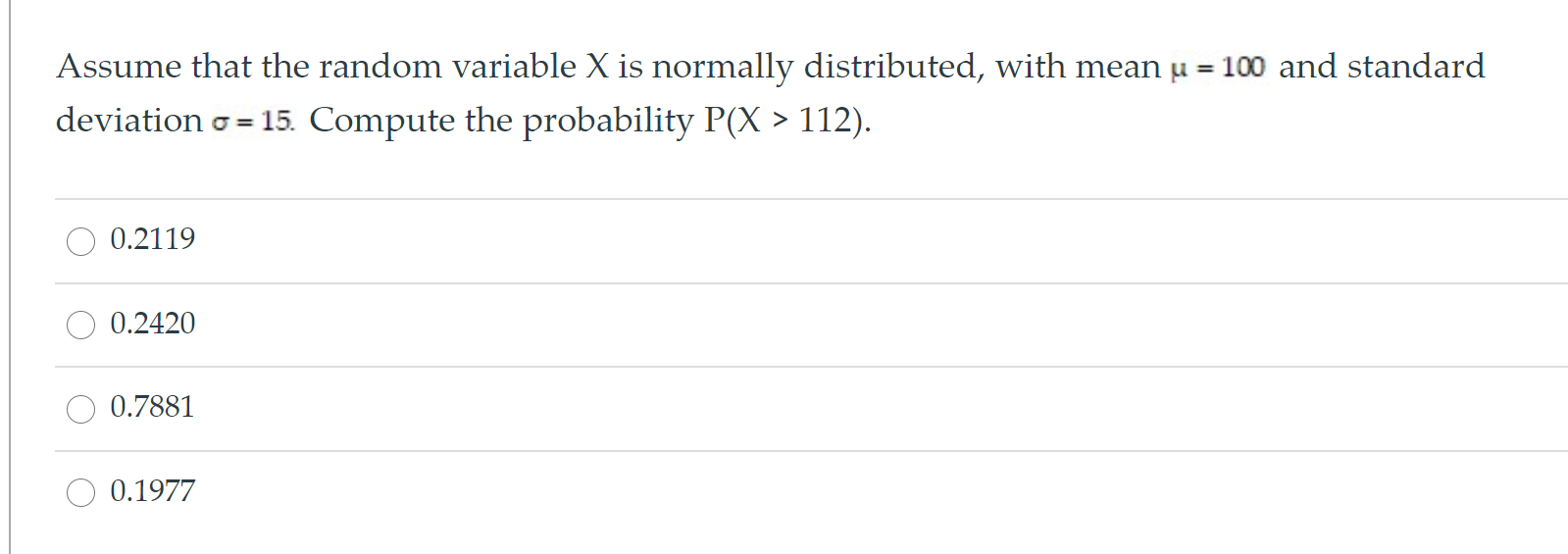

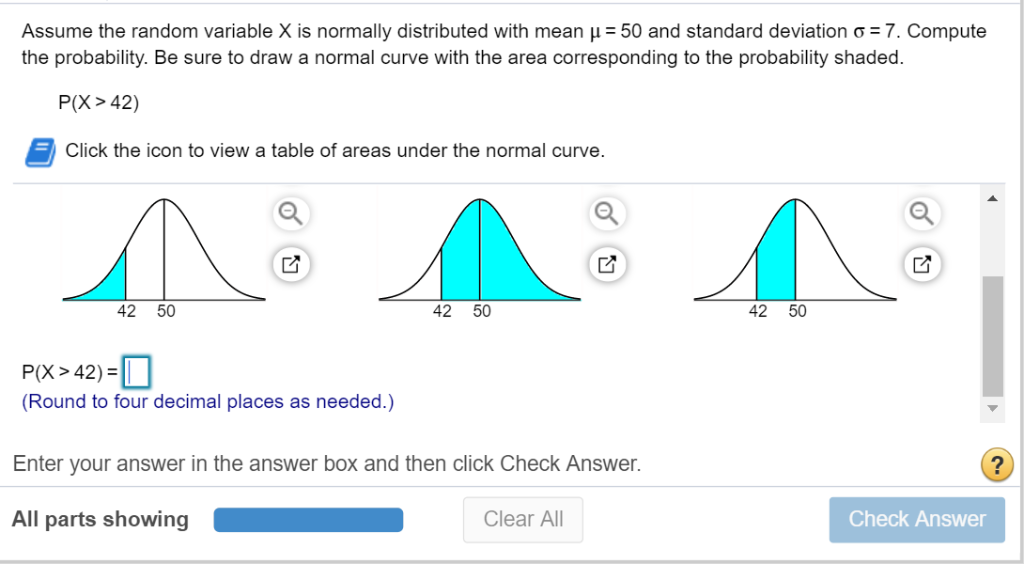

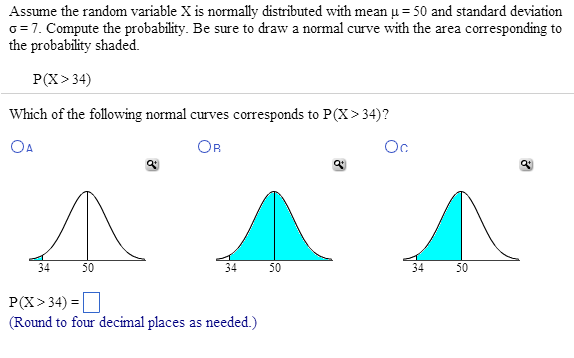

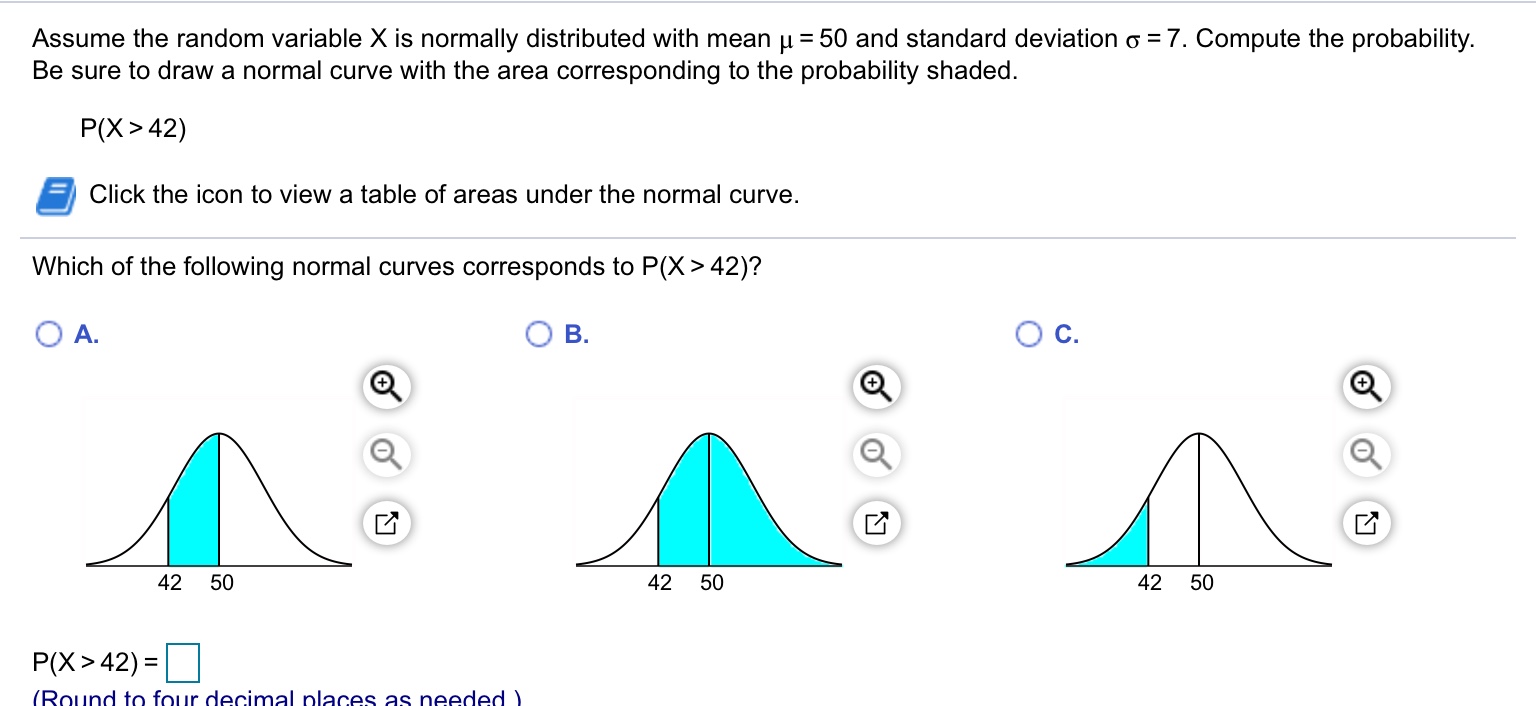

Assume That The Random Variable X Is Normally Distributed

You know, I was just thinking the other day about how much we rely on averages. Like, my friend Brenda was telling me about her garden. She’s obsessed with growing the biggest tomatoes. And she’s been meticulously measuring them every year, creating these elaborate spreadsheets. Her goal, she proudly declared, is to beat last year’s average tomato weight. Bless her heart. It got me wondering, though… what if the tomatoes themselves, in their infinite wisdom, have their own idea of what an "average" should be?

Seriously though, it’s a funny thought. We humans love our neat little boxes and our summaries. We take a whole bunch of things – heights, test scores,… well, tomato weights – and we boil them down to a single number: the average. And often, that average is pretty darn useful. But what if there's more to the story than just that one number? What if the spread of those numbers, how they clump together or fan out, tells us something just as important, if not more?

This is where we stumble, or rather, gracefully land, into the land of the Normal Distribution. And before you click away thinking "Oh no, not another math thing!", stick with me. It's actually a concept that pops up everywhere, and once you get it, you’ll start seeing it in the most unexpected places. It’s like getting a secret decoder ring for the universe.

So, let’s pretend, just for a moment, that our friend Brenda’s tomatoes aren’t just randomly growing. Let’s imagine that the weight of each individual tomato, if we were to measure a truly massive number of them, would naturally fall into a specific pattern. And this pattern, this distribution of weights, looks suspiciously like a bell. Yep, a classic bell curve.

This, my friends, is the heart of the idea: Assume That The Random Variable X Is Normally Distributed. What does that even mean? Well, let’s break it down, slowly and without any intimidating jargon. Imagine X is our random variable. It could be the height of a randomly selected person, the score on a standardized test, the amount of time it takes for a lightbulb to burn out, or, you guessed it, the weight of a tomato from Brenda’s garden. “Random variable” just means it’s something we can measure, and its value can vary from one instance to another.

Now, when we say X is normally distributed, we’re basically saying that the values of X tend to cluster around a central point, and the further away we get from that central point, the fewer observations we find. Think about it: most people are of average height, right? You don’t find heaps and heaps of people who are only three feet tall, nor do you find tons and tons of giants who are ten feet tall. The vast majority fall somewhere in the middle. Same with test scores: a lot of students will get scores around the class average, fewer will get super high scores, and even fewer will get abysmal scores. Makes sense, doesn’t it?

This bell-shaped curve, this iconic representation of the normal distribution, is actually defined by two key parameters. And these two numbers are the superstars of the whole show. They dictate the exact shape and location of our bell curve.

The first superstar is the mean, often represented by the Greek letter μ (mu). This is our central point, the peak of the bell. It’s the average value we expect for our random variable. So, for Brenda’s tomatoes, μ would be the average weight she’s aiming for, or what historical data suggests is the typical weight. It tells us where the distribution is centered. It’s like the bullseye on a target.

The second superstar, and often the one that gets a bit more attention because it describes the spread, is the standard deviation, denoted by the Greek letter σ (sigma). Now, this is where things get really interesting. The standard deviation tells us how spread out the data is. A small standard deviation means that most of the data points are clustered very close to the mean. Our bell curve would be tall and narrow, like a sharp peak. A large standard deviation, on the other hand, means the data is more scattered, with many points further away from the mean. Our bell curve would be short and wide, spread out like a gentle rolling hill. It’s the measure of variability.

So, if Brenda’s tomatoes have a normal distribution with a mean of, say, 200 grams (μ = 200) and a standard deviation of 20 grams (σ = 20), that tells us a whole lot more than just the average weight. It means that most of her tomatoes will likely weigh somewhere between 180 and 220 grams (one standard deviation away from the mean in either direction). And if we go out two standard deviations? That covers about 95% of her tomatoes! So, most of them will be between 160 and 240 grams. See how powerful that is? We can start making predictions!

This is why assuming a normal distribution is such a big deal in statistics and in so many fields. It gives us a powerful tool for understanding and predicting the behavior of data that seems, on the surface, to be a bit chaotic. Think about it: if you’re a manufacturer making lightbulbs, you want to know, on average, how long they’ll last. But you also need to know the range of lifespans. You don’t want too many bulbs burning out way too early, nor do you want them all lasting exactly the same amount of time (that would be… weirdly unnerving, wouldn’t it?). The normal distribution helps you model this variability.

Let’s dive a little deeper into the properties of this magical bell curve, because there are some really neat tricks up its sleeve.

The 68-95-99.7 Rule (or, The Empirical Rule)

This is probably the most famous takeaway from assuming a normal distribution. It’s super handy and easy to remember. It states that for a normal distribution:

- Approximately 68% of the data will fall within one standard deviation of the mean (μ ± σ).

- Approximately 95% of the data will fall within two standard deviations of the mean (μ ± 2σ).

- Approximately 99.7% of the data will fall within three standard deviations of the mean (μ ± 3σ).

Seriously, this rule is like a cheat code. If you know the mean and standard deviation of a normally distributed dataset, you can instantly estimate the proportion of data that falls within certain ranges. It’s that straightforward. So, back to Brenda’s tomatoes (I’m really invested now). If μ = 200g and σ = 20g:

- About 68% of her tomatoes will weigh between 180g and 220g.

- About 95% will weigh between 160g and 240g.

- And almost all (99.7%) will weigh between 140g and 260g.

This means any tomato weighing, say, 100g or 300g would be extremely rare. Like, Brenda might want to check that specific plant for mutant tomato tendencies.

This rule is incredibly useful for understanding the typicality of an observation. If you’re looking at a data point that’s more than three standard deviations away from the mean, you’re likely looking at an outlier, something unusual. In real life, this could be a person who is exceptionally tall or short, a student who scored way above or below average on a test, or, yes, a ridiculously oversized or undersized tomato.

But it's not just about describing the spread. The normal distribution is also fundamental to a lot of statistical tests and methods. Why? Because many statistical procedures assume that the data they are analyzing comes from a normal distribution. If that assumption holds true, then the results of these tests are more reliable and interpretable.

Think about hypothesis testing. Let’s say you’re a teacher and you’ve implemented a new teaching method. You want to know if it actually improves student scores. You might compare the scores of students taught with the new method to those taught with the old method. If you assume that the scores for each group are normally distributed, you can use statistical tests (like a t-test, if you’re feeling adventurous) to determine if the difference in average scores is statistically significant, or if it could have just happened by chance. The normal distribution is the bedrock upon which these tests are built.

And what about confidence intervals? These are phrases you hear a lot in surveys and research: "The margin of error is plus or minus 3 percentage points." That "plus or minus" bit? That’s often derived from the properties of the normal distribution! A confidence interval gives you a range of values within which the true population parameter (like the true average height of all people, not just the ones you sampled) is likely to lie, with a certain level of confidence. The normal distribution allows us to calculate those ranges accurately.

It’s also worth noting that even when data isn’t perfectly normally distributed, the normal distribution can still be a pretty good approximation, especially if your sample size is large enough. This is thanks to something called the Central Limit Theorem. Oh boy, another theorem! But this one is a game-changer. It essentially says that if you take many random samples from any population (even one that isn’t normally distributed), and you calculate the mean of each sample, the distribution of those sample means will tend to be normally distributed. How cool is that? It means that even if your individual data points are all over the place, the averages of groups of those points will often behave like a normal distribution. This is a huge reason why the normal distribution is so ubiquitous in statistics.

So, where does this leave us? Well, it means that when we see data that seems to follow a bell curve pattern, or when we’re using statistical methods that rely on normality, we can be pretty confident in our interpretations. It’s like having a map and a compass when navigating the sometimes-murky waters of data.

Of course, it’s not always a perfect fit. Real-world data can be messy. You might have data that's skewed (more on one side than the other) or has multiple peaks (bimodal or multimodal). And in those cases, assuming a normal distribution might lead you astray. That's why it's always a good idea to check if your data actually looks normal before you go making grand pronouncements. You can do this by looking at histograms, Q-Q plots, or performing statistical tests for normality. It’s all about being a good data detective!

But for those times when X is normally distributed, or when the Central Limit Theorem gives us a helping hand, it’s a beautiful thing. It simplifies complex patterns, allows for powerful predictions, and forms the foundation of so much statistical inference. So, the next time you hear someone talking about averages, spare a thought for the bell curve. It’s probably working behind the scenes, making sense of it all.

And Brenda? I told her about the normal distribution and her tomatoes. She looked at me with wide eyes, then grabbed her spreadsheet. "So," she said, a mischievous grin spreading across her face, "you're telling me there's a mathematical reason why some tomatoes are bound to be duds?" I just smiled. Sometimes, the most profound truths are found in the most humble of vegetables, and the most elegant of mathematical shapes.